Organoid Computing: AGI Convergence

Part 2: AI is leaving us behind. We need a portfolio of bets.

Last week, 200,000 ‘brain cells in a dish’ learned to play the first-person shoot-em-up game Doom, and you can now access these cells via the web and run programmes on them, through the ‘cortical cloud’ or ‘CL1’.

You code this ‘neural computer’ in Python. Describing it as a cloud service you code in Python makes the almost supernatural seem mundane. If you’re pressed for time, you should probably stop reading and just watch this 6-minute video produced by the team behind it at Cortical Labs. I challenge you not to be awe-struck:

Can you imagine watching or reading this if we hadn’t lived through the past 13 years since AlphaGo Zero (which, even now, if you go back and read the Wiki summary, is still dazzling), years in which AI breakthroughs have become so routine that they rarely make headlines and are now the background noise to life?

Also this week, Eonsys claim to have copied a biological brain, neuron-by-neuron, synapse-by-synapse, and released this video showing how they had gone from mapping a fruit-fly brain in near-perfect detail in 2024, to embodying that brain in a simulated fly that, err… acts exactly like a fly. A whole brain emulation. As they put it, ‘a qualitative threshold, not an incremental one’:

They claim they are doing this now for mice, with humans explicitly on the list. Brain uploads. If you think about this for a minute, it means that from the fly’s perspective, with its brain fully uploaded it is now living in a simulation.

It’s incredible. Fully sci-fi. I’ve been talking about the importance of biocompute, or organoid computing, for a while. Whole brain emulation seemed too sci-fi, even for me. But here we are.

At Fujitsu, we asked AI-expert and friend Professor Ken Payne to gather luminaries from across the UK compute ecosystem and write up a report on ‘future compute’. At the time, it seemed to me that both smart companies and smart countries would be investing in what’s next. Good strategy involves, as Michael Porter tells us ‘doing things differently or doing different things’. But this involves risk, and the tendency is to do the same things that everyone else is – or at best to focus your research adjacent to what is already being done. Less chance of embarrassment. This, and traditional sales and reporting cycles, is why, as Jeff Bezos told Wired magazine in 2011:

“If everything you do needs to work on a three-year time horizon, then you’re competing against a lot of people, but if you’re willing to invest on a seven-year time horizon, you’re now competing against a fraction of those people, because very few companies are willing to do that.”

Giving evidence to Parliament on ‘the Future of War’ a few weeks ago, I talked about how a smart UK AI Strategy would invest across a portfolio of alternative architectures and approaches, not just LLMs running on silicon, observing that this is what China seemed to be doing when I visited with the UN last year. Jesse Norman MP asked me if I had written anything on this. I said yes, and there are references to organoid computing in this piece for the Special Competitive Studies Project on the Future Operating Environment. But in fact I haven’t tackled the subject directly. This blog aims to do that, sparked by the remarkable announcements of the past week.

Winner-takes-All

My belief is that the AI market is likely a winner-takes-all market. Everything we care about in business and international relations (and much more) is the product of intelligence: effective diplomacy, strategy, operational plans, tactics, decisions, products, services, marketing, and in warfare, weapons. There will be no upper limit on how much intelligence companies and countries seek in trying to gain an edge over others. It is for that reason that the US Presidential Memo on AI for National Security under President Biden contained clauses allowing for the seizure of AGI models from the private sector (see Faustian Bargain from the blog in Oct 2024), something close to nationalisation. The argument that AGI will likely be nationalised was made explicitly and powerfully this week by Palantir’s Alex Karp:

“If Silicon Valley believes we are going to take away everyone’s white-collar job … and you’re gonna screw the military—if you don’t think that’s gonna lead to nationalization of our technology, you’re retarded…”

If you accept the ‘winner-takes-all’ in AI premise, you will see that the UK (and all nations) can’t afford to come second. In fact, unless you think this is certainly true or certainly false – and how could you? - it isn’t really about a binary acceptance or rejection. It should be a probabilistic judgement, with the risk that it is winner-takes-all hedged with proportionate investment. It won’t be, of course, but we should still try to make the argument in the hope it nudges the needle in politics and public discourse a little bit towards taking this seriously, and then we have to hope like hell that we get lucky and a small movement of the needle proves enough.

LLM Progress: The Need for a Portfolio of Bets

It is likely, in my view, that LLM scaling continues to deliver unprecedented and increasingly superhuman performance in ever more domains.

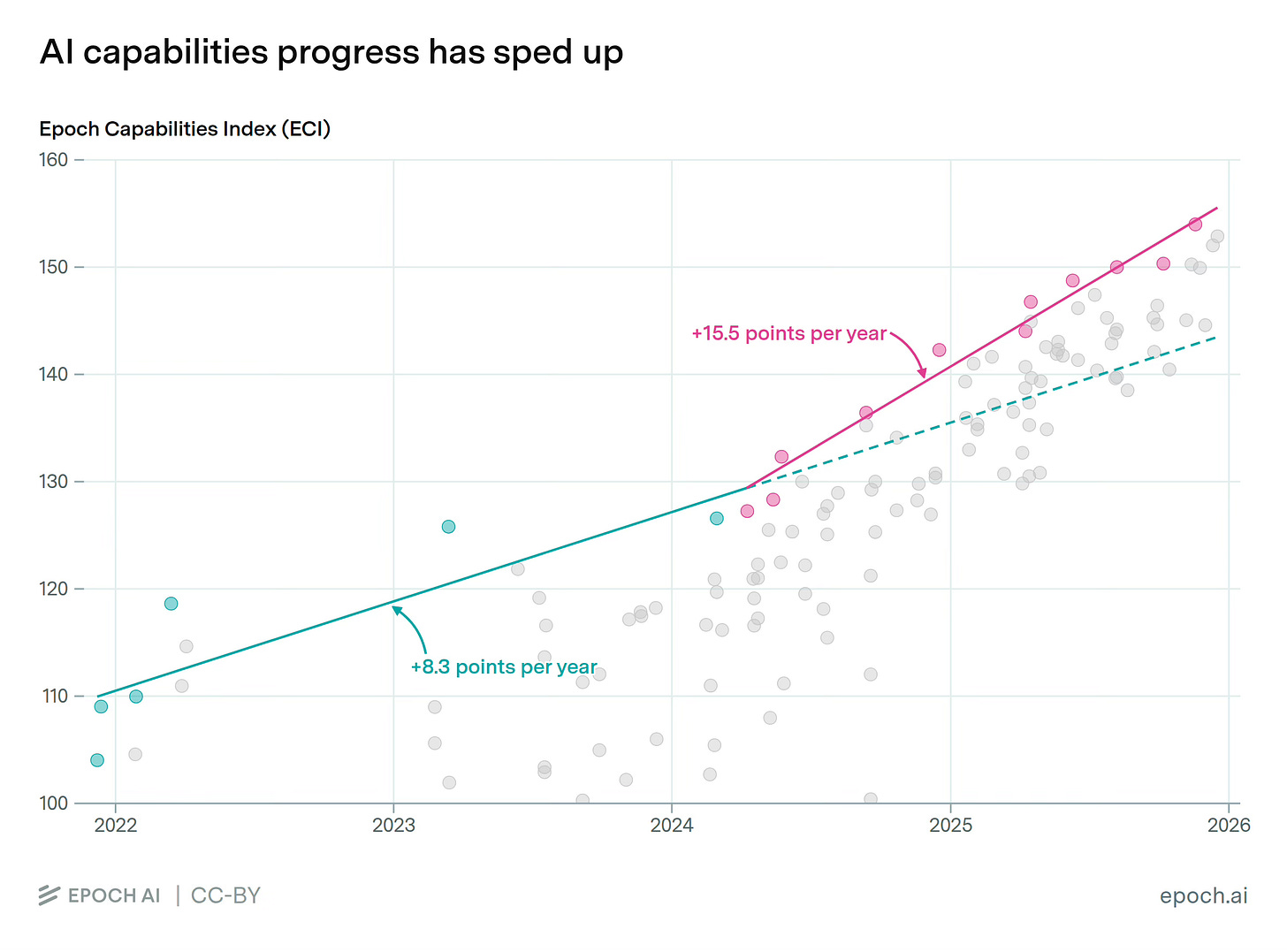

AI capabilities continue to speed up in their development, not slow down:

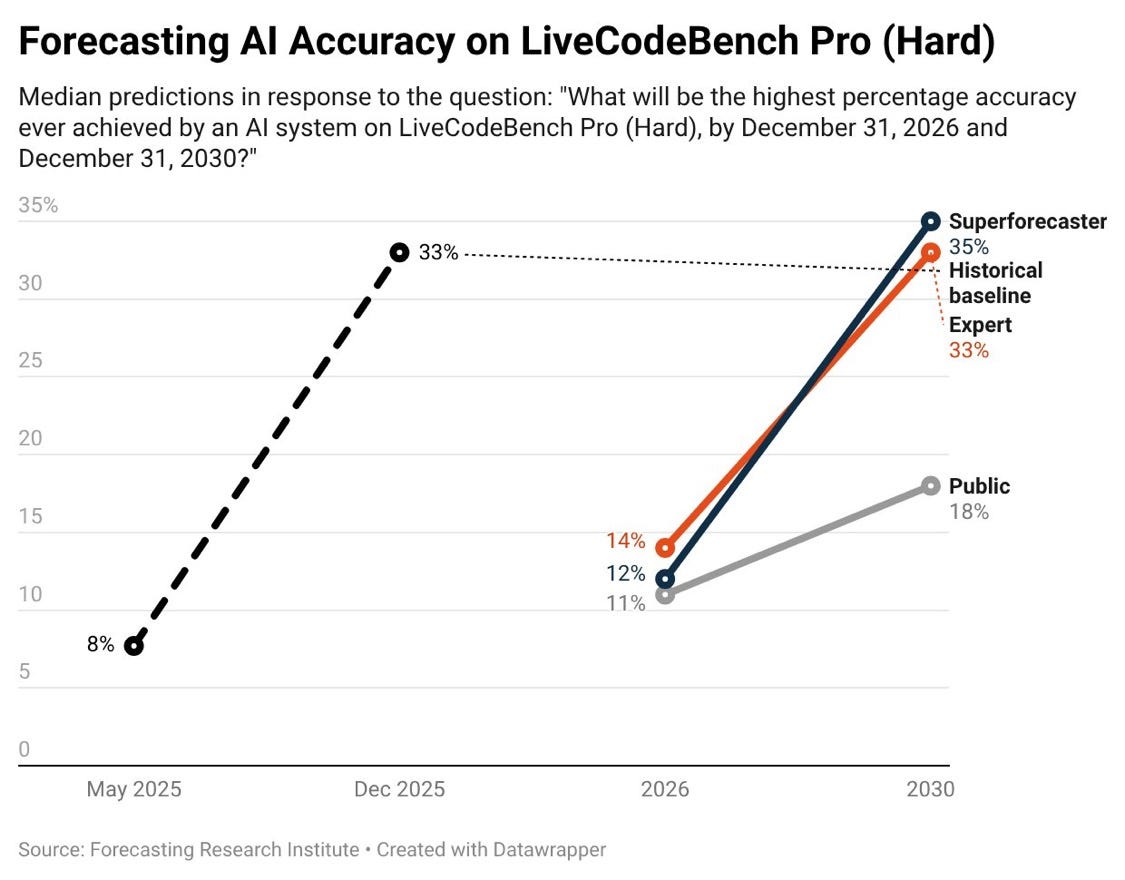

We continue to under-estimate the speed of progress. Here, experts and superforecasters predicted 14% accuracy in coding tasks in 2026, and 33% accuracy by the end of 2030. GPT 5.2 has already exceeded 33%:

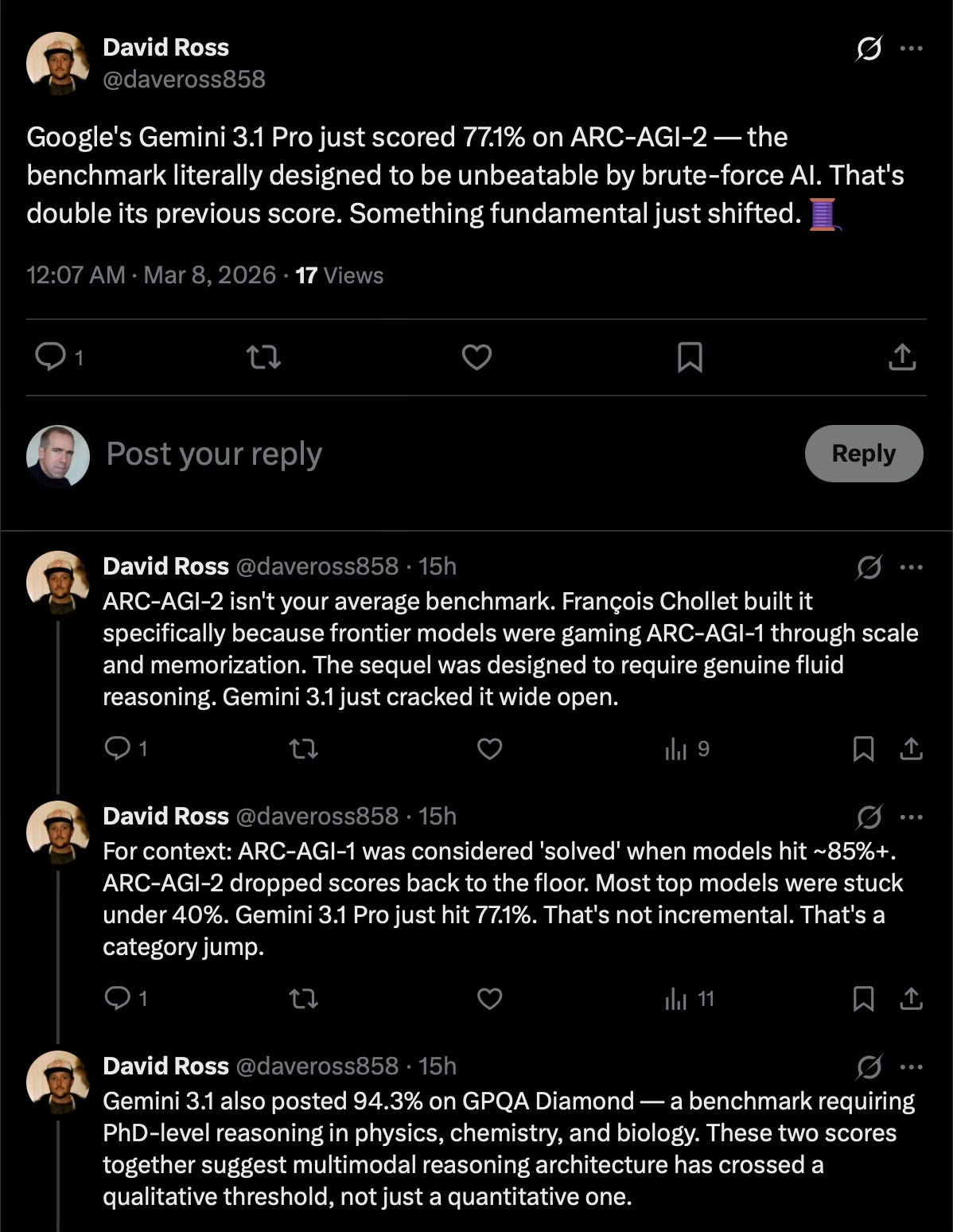

GPT 5.4, released on 5th March, three days before this blog was written, now shows AI matching or outperforming humans on 83% of economically valuable tasks on the GDPval benchmark. And now on ARC-AGI-2, the benchmark designed to test models on tasks that weren’t amenable to brute force, Gemini is scoring 77.1%.

So naturally, we’re now on version 3, ARC-AGI-3 (as noted previously, this is necessary, but it risks obscuring the progress and the continuous falsification of claims that ‘AI will never…’).

And the amount of compute coming online dwarfs that available to date, doubling every seven months (I had to go back and read that again):

This suggests that progress is only going to increase: speed-up, not slow-down.
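To put a seven-month doubling time into annual terms, here is a minimal back-of-envelope sketch in Python. It assumes the doubling time stays constant, which is a simplifying assumption of mine rather than a forecast, and the function name `growth_factor` is purely illustrative.

```python
def growth_factor(months_elapsed: float, doubling_months: float = 7.0) -> float:
    """Multiplicative growth after `months_elapsed`, assuming a fixed doubling time.

    The 7-month default is the doubling time quoted in the text; holding it
    constant over the whole period is an assumption made for illustration.
    """
    return 2.0 ** (months_elapsed / doubling_months)


# One doubling period gives exactly 2x by construction.
one_period = growth_factor(7)

# A year of growth at this pace: 2 ** (12 / 7), a little over 3x.
one_year = growth_factor(12)

# Three years at the same pace: 2 ** (36 / 7), roughly 35x.
three_years = growth_factor(36)

print(f"1 year: {one_year:.2f}x, 3 years: {three_years:.1f}x")
```

On those assumptions, available compute a little more than triples every year, and grows roughly 35-fold over three years.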

Last year, Epoch reported that three-quarters of that compute power was in the United States.

My conclusion: the UK is not going to compete by playing around the edges at this. Our planning laws, low public and private investment in infrastructure, a massive and growing say-do gap between the infrastructure investments that are planned (or at least announced) and what money has actually been found for, plus our sky-high energy costs, mean that we are not meaningfully in the race as a pure scaling bet. We won’t have the compute needed.

We are going to have to do things differently, and do different things.

I think most agree. But if that’s true, our portfolio bets look under-weighted.

Positives & Progress

There are positives. One is David Silver leaving DeepMind and raising a $1Bn seed round for his London-based start-up, Ineffable Intelligence, which will presumably work on his continuous learning models, as described in ‘Welcome to the Era of Experience’, the paper he coauthored with Prof Rich Sutton last year. At Cassi, we have our plans too. No doubt others do also.

There are similar positives in the AI Action Plan, and in a particularly energetic Minister for AI in Kanishka Narayan MP, willing to work with former PM Rishi Sunak and others across party lines. More and more officials, Ministers and MPs are coming to see that this has to be taken seriously. Private sector talent is joining Government to try to push us on in AI (to all of whom: I salute you - it is not easy, but is vital).

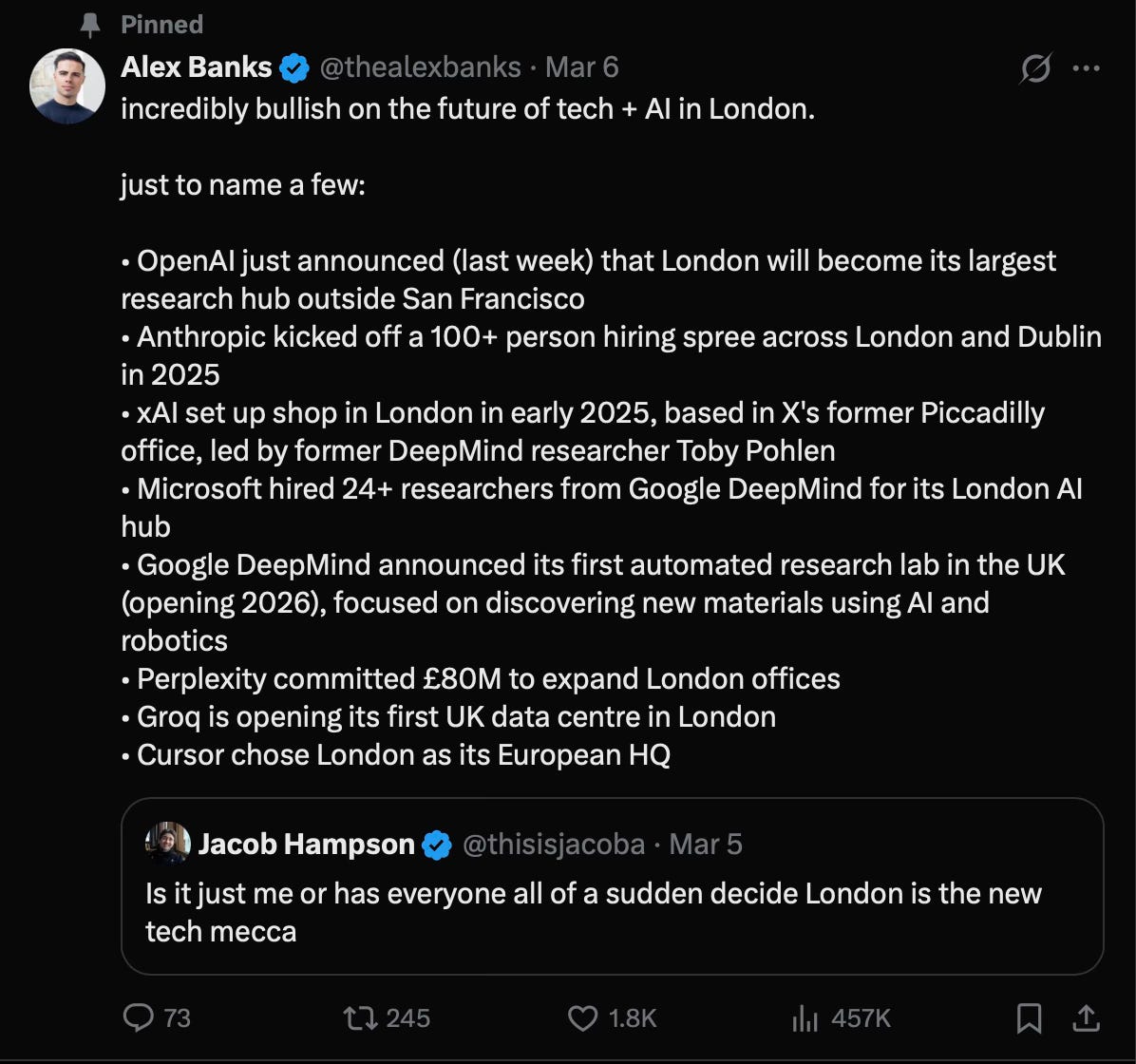

There is an increasing amount of optimism around London’s potential in AI, this from AI commentator Alex Banks at Signal AI (Substack here):

And this, also this week (title says London, but in the article Manchester is celebrated for its potential and progress too):

The Portfolio Case: Organoid and Wetware

Progress should be celebrated, but there is no room for complacency. We need a focus on the alternative architectures that sparked this article. It seems to me these remain under-estimated – both in their likely speed of advance, and their ability to give us leap-ahead advantage. Cortical’s ‘Intelligence-in-a-Dish’ playing Doom makes the case much stronger and harder to ignore.

Cortical are not alone. FinalSpark offer 24/7 remote access to their brain organoid. Two years ago, the scientists in the US behind ‘Brainoware’ grew a biological organ that resembles the brain, and proved it could undertake basic speech recognition tasks (Nature write-up here).

The lesson here is not that biocompute is a sure thing. It is that what looked like a curiosity (2020) became a research domain, ‘Organoid Intelligence’ (2023), and has now become an engineering domain (2025).

Cortical Labs going from Pong (2021) to Doom (2025) has the same feel, to my mind, as DeepMind going from Atari games (2012) to Go (2016) to AlphaStar for StarCraft II (2019) (game theory, imperfect information, long-term planning, real time, large action space - at which point the implications for defence were clear) and then beyond: a line of work treated as a parlour trick for far too long. I remember, while working on the Single Synthetic Environment at Improbable for the MOD, being dismissed back in the headquarters for suggesting progress in games might matter. Even in 2020, in Downing Street, people were critiquing DeepMind for only solving games, and nothing that mattered. I think too many are making this same mistake again with wetware and wider bets.

Alex Wissner-Gross’s ‘first multi-behavior brain upload’ sits slightly outside the wetware discussion because the computation is still running on conventional hardware. But strategically it belongs in the same portfolio. Again, the important thing is the speed at which the pieces are coming together. In 2024 researchers published a connectome-based model of the fruit fly Drosophila brain built from more than 125,000 neurons and 50 million synaptic connections; in parallel, other teams built embodied fly simulators in MuJoCo and NeuroMechFly v2. Wissner-Gross and Eonsys now claim to have combined those strands into a connectome-derived controller driving a perfectly simulated brain that runs in a simulation without knowing it is in a simulation. The direction of travel is as alarming as it is remarkable: the frontier will likely move through architectures copied from nervous systems and trained in closed sensorimotor loops – not just LLM scaling. Our sovereign AI strategy should treat that as part of the same portfolio of bets as biocompute, organoids and neuromorphic systems, and start thinking of it now as an engineering project for commercialisation rather than a research project.

If you think, as I do, that there is a better-than-even chance frontier AI becomes a winner-takes-all market, Britain cannot define sovereignty as access to other people’s models, chips and clouds – we will be forever offered access to those just behind the frontier – unless we have our own edge, a frontier where we push further, and something to offer access to in exchange. Current UK policy still leans heavily towards the incumbent stack: up to £2 billion for public compute by 2030, the lion’s share of funding. The Compute Roadmap does explicitly make “new computing paradigms” a sovereign AI priority, and says AI Research Resource and AI Growth Zones should provide testbeds and routes to scale for British firms. But that insight still sits at the edge of policy, not at the centre, and progress is tectonically slow.

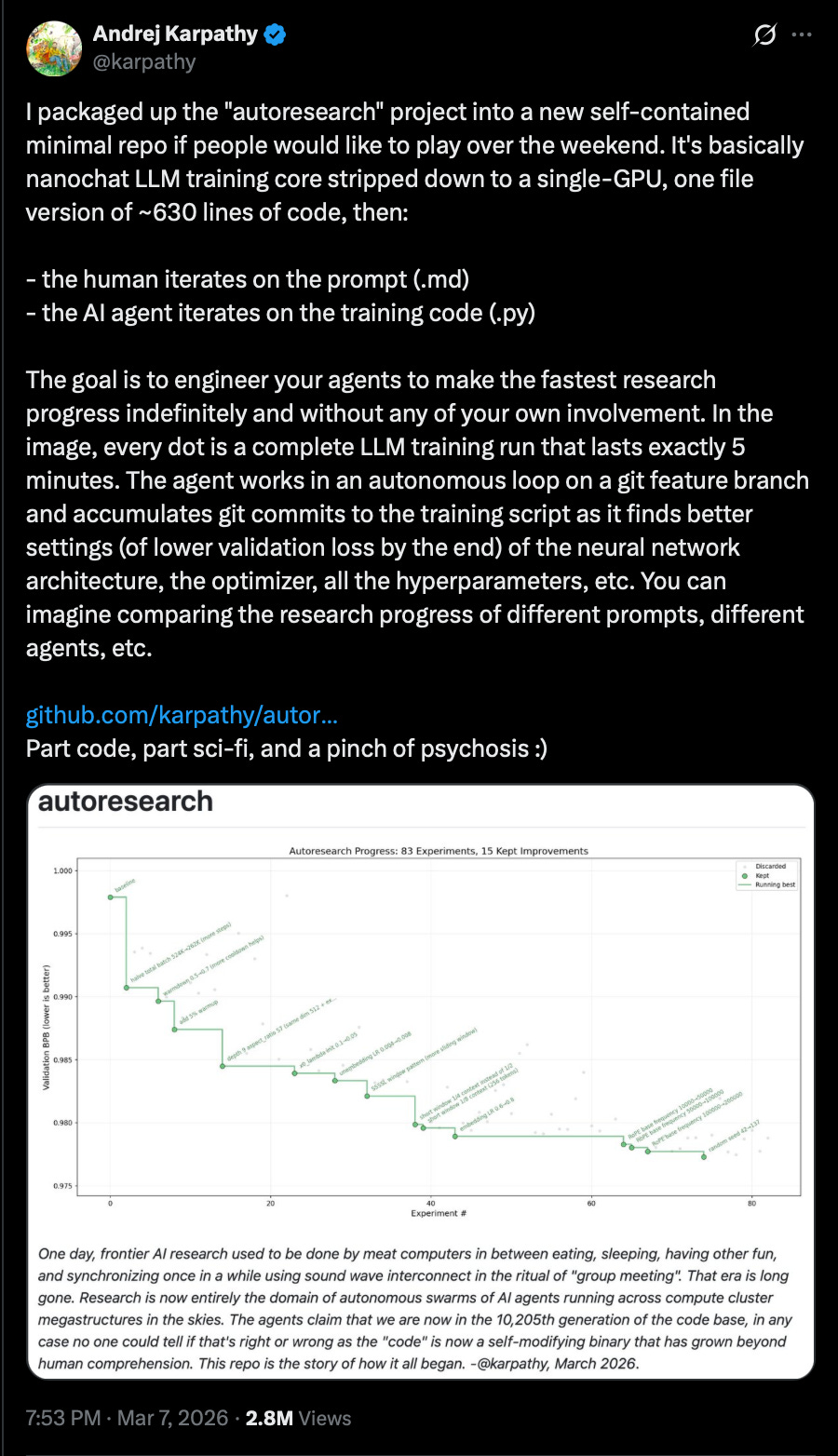

Technological progress is also likely to be faster this time because the existing stack will help invent its successors. DeepMind’s AlphaChip is already accelerating chip layouts. GNoME has surfaced millions of candidate materials. The same pattern will compress search across quantum computing, photonics, memristors, biological interfaces and lab automation – I’ve written about automated R&D, increasingly a current reality rather than a science fiction future. But I was still surprised to see Andrej Karpathy drop an open-source GitHub repo that enables “…your agents to make the fastest research progress indefinitely and without any of your own involvement.”

The key point is that AI-for-science compresses cycles. The path from weird demo to engineering platform is likely to be shorter than many assume. Our slow progress is becoming more and more of a problem.

ARIA’s Nature Computes Better programme is a rare exception, and matters to Britain. In Downing Street, I was often told, correctly, that the UK has real strength in synthetic biology and should double down on it rather than try to outspend deeper-pocketed American AI firms on their own ground. Synthetic biology would be our edge. That remains the right instinct. The government’s National Vision for Engineering Biology put £2 billion behind the sector. UKRI now classifies engineering biology as a strategic priority. UK Chief Scientific Adviser Dame Angela McLean’s 2025 report on engineering biology makes the economic case for the field well, but it is a compute-free zone. In it, AI is something that might help research in biology, nothing more. I couldn’t find a single .gov document that talks about organoid computing at all (if you can, post a link in the comments). Yet biology and AI are converging. A sovereign AI strategy that ignores biocompute is ignoring one of the few domains where Britain may actually have comparative advantage. But today, at least insofar as I am aware, none of the companies working on this are in the UK.

ARIA deserves real credit for seeing the opportunity. Nature Computes Better and its Scaling Compute programme are genuine portfolio bets: nearly £100 million for Scaling Compute, within which £50 million for the Scaling Inference Lab, and seed bets ranging from Cell Learning for Natural Computing and Embodied Cognition in Single Celled Organisms to probabilistic computing, optical computing and brain-inspired neuromorphic networks. That is the right shape of thinking. What is missing is scale and pull-through. ARIA can seed a field. It cannot, on its own, build a national position in one.

This matters particularly now. The House of Lords Science & Technology Committee was warning this time last year that the UK risked squandering its advantage in engineering biology, while in November a collaborative paper from the Tony Blair Institute, sponsored by Tony Blair and William Hague, warned that ‘…the country risks failing to convert its leadership in quantum research into commercial scale and strategic value, which depends on quantum companies thriving and scaling at home’. Our portfolio bets seem to be reducing, at precisely the time progress is speeding up and we should be doubling down.

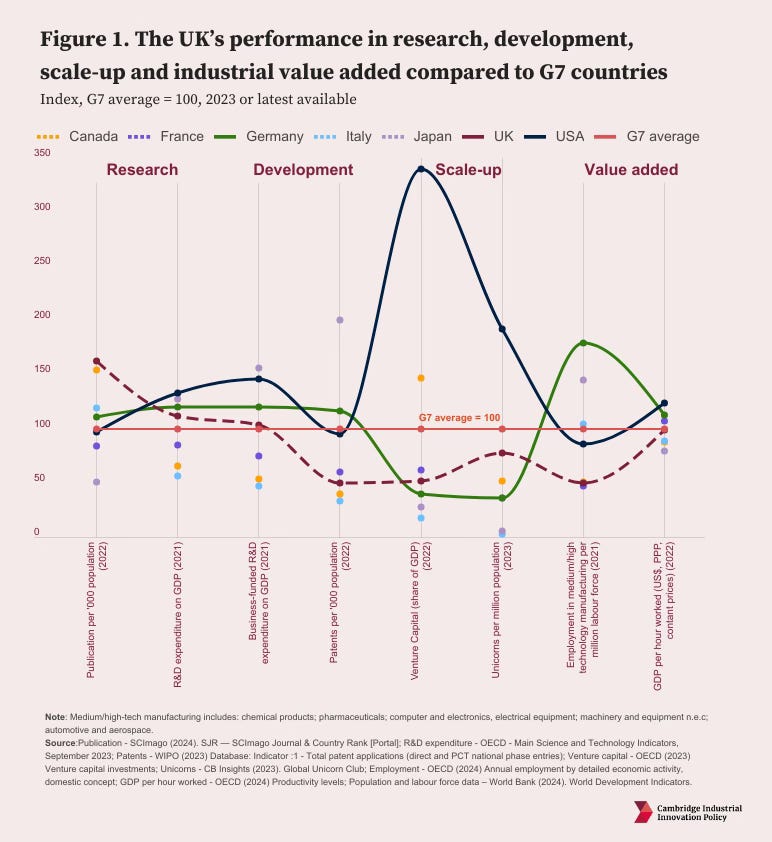

The UK continues to under-invest in R&D relative to other advanced economies. R&D tax relief offsets this to some extent, and allows the headline figures for Government R&D support to look better. But given how poorly the UK continues to do in commercialising research, as the chart shows (red dotted line is the UK), it does not seem to be an offset that is effective:

If the measure is not ‘relative to other countries’ but relative to the risk/threat and opportunity AI poses, it is hard not to conclude that we are hoping for a miracle rather than planning to succeed in winning this race.

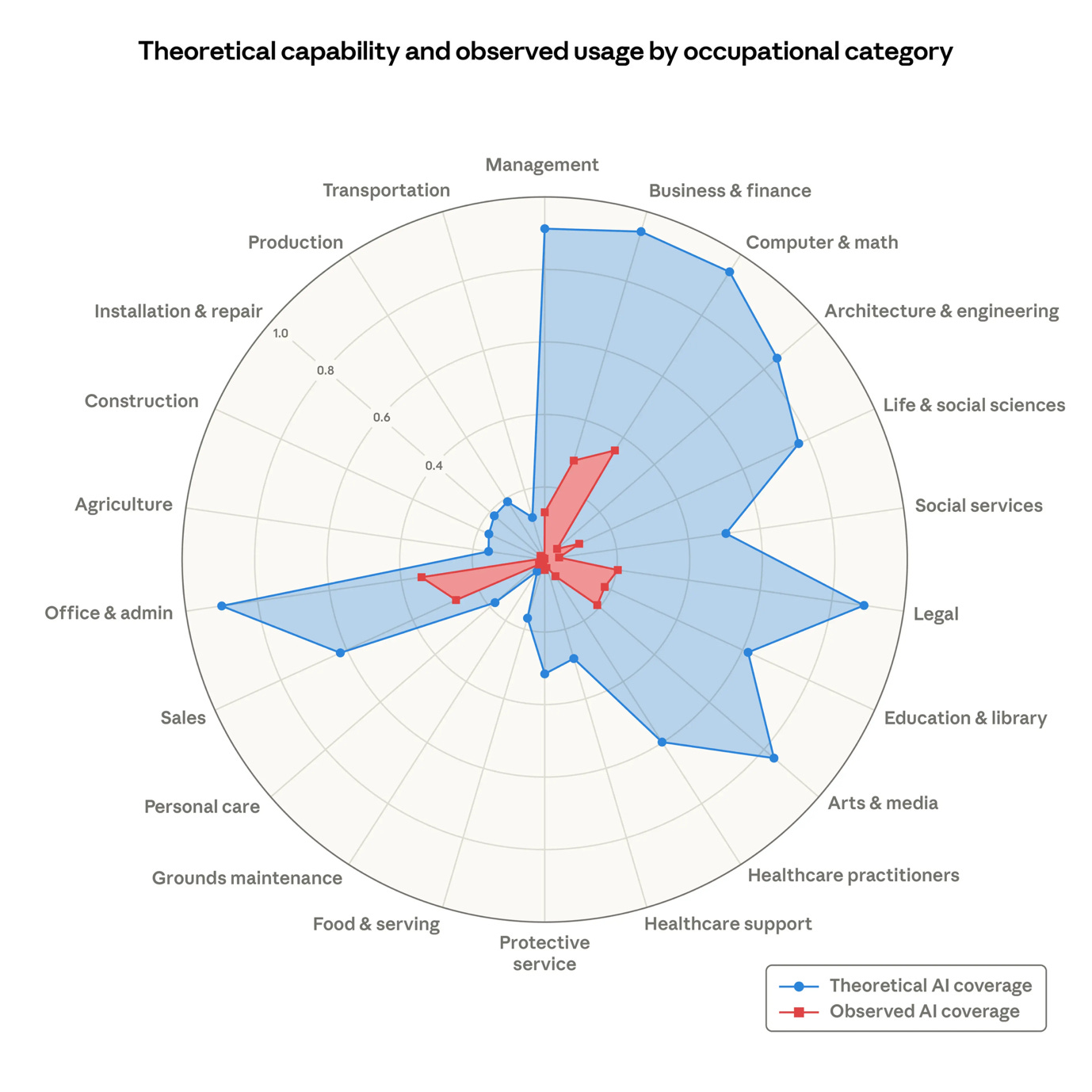

To get a sense of that risk/threat and opportunity, take a look at this from Anthropic, this week, on the exposure of the labour market to automation:

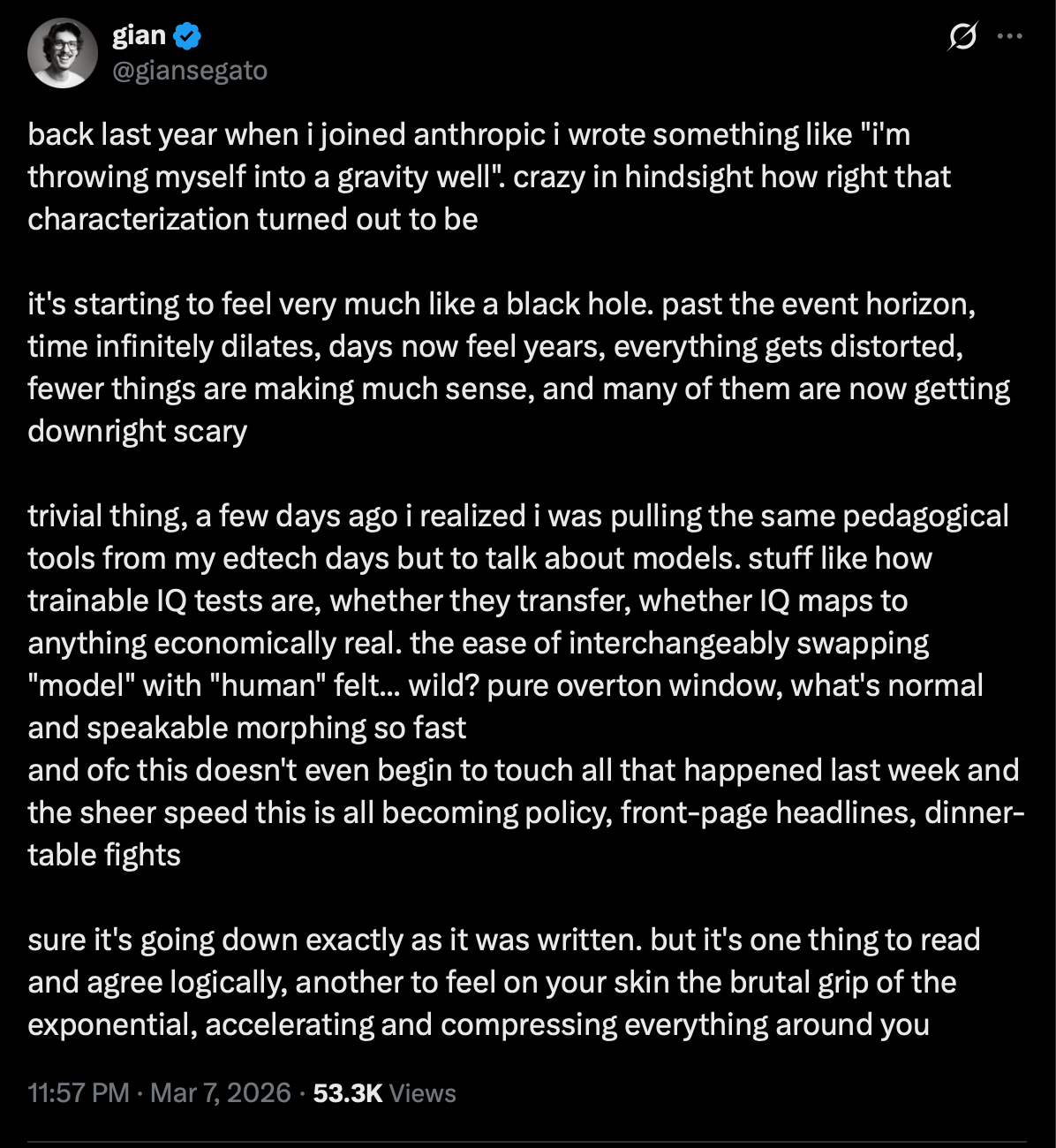

I said recently (in our Cassi Cygentic launch video: here) that the exponential was no longer theoretical: we are now starting to feel it. I think this is true and becoming more so. Hairs-on-back-of-neck-standing-up intense. I think it is summed up well here by data scientist and engineer at Anthropic, Gian Segato:

As a country, we can’t afford to not broaden and deepen our bets across the intelligence portfolio. Yes, there is progress and there are positives to celebrate. But the frontier moves ever faster, and we don’t. Incrementalism and announceables won’t be enough. Brains in a dish playing Doom are just the beginning.

And if that doesn’t stop you in your tracks, I don’t know what will.

Visit us at www.cassi-ai.com

Keith, remarkable week to be tracking this space. The Cortical Labs demo alone is worth stopping to absorb.

The portfolio argument resonates strongly with me, and the UK's position makes it especially urgent. You/we (I am still a citizen!) can't out-compute the US or China on their own terms, so doing things differently isn't just good strategy, it's the only viable one. The ARIA work and the engineering biology thread are exactly the right instincts.

My only observation, and take it under advisement please: I suggest you try to avoid the risk that biocompute excitement repeats the same architectural mistake as LLM scaling, assuming more biological substrate, more emergent complexity, automatically produces better intelligence.

Swarm systems are one of nature's most impressive achievements. But emergence without governance isn't intelligence, it's complexity. Ant colonies build sophisticated structures, wage wars, farm fungi. No individual ant understands any of it. There's no judgment, no executive function catching when the system optimizes for the wrong thing.

The question for the portfolio isn't just "which substrates?" It's "which architectures preserve human judgment as the governing layer?" Because the real risk isn't that these systems become too intelligent, it's that we're building increasingly capable systems that systematically remove human oversight at exactly the moment they become capable of real-world action.

That design principle matters as much for organoids and wetware as it does for LLMs.

More on this in my Substack next week.

PS - Doom was my absolute favorite, my eldest, who is starting work in bio-research, is super organoid-curious, and Charlie says hi!